Three AI coding tools, three fundamentally different philosophies. Here's how to pick the right one for your actual workflow.

LindleyLabs Editorial

2026-04-25

Three tools. Three completely different ideas about what "AI-assisted coding" should mean. One is a terminal agent that reads your entire codebase and thinks before it types. One is a polished IDE that makes AI feel like autocomplete on steroids. One hands you a PR while you go make coffee. Choosing wrong costs you hours daily.

Before benchmarks, features, or pricing — understand what you're actually comparing. These tools aren't competing on the same axis.

Claude Code is a terminal-first agentic coding tool built by Anthropic. You install it, point it at a project, and it operates directly on your local files. It runs real shell commands, edits files, executes tests, and shows you diffs before applying changes. The key differentiator: it runs with your full dev environment (local databases, private APIs, environment-specific tooling) and can keep a very large slice of your codebase in context at once. As of 2026, that context window is 1 million tokens.

Cursor is an IDE. Specifically, it's a VS Code fork rebuilt around AI-native workflows. You get Tab autocomplete, Composer for multi-file edits, and an agent mode — but the core experience is visual, interactive, and familiar. Importantly, Cursor is model-agnostic: you can run Claude Sonnet through it, GPT-5 through it, or whatever ships next week. That flexibility is genuinely useful and occasionally confusing.

OpenAI Codex (not the 2021 completion API — that's dead) is a cloud-based autonomous agent integrated into ChatGPT and OpenAI's platform. You describe a task. Codex spins up a sandboxed virtual machine, clones your repository, works independently, and opens a pull request when done. You can walk away. It's the only one of the three that genuinely means it when it says "fire and forget."

That architectural split — local terminal agent vs. AI-native IDE vs. cloud sandbox agent — explains most of the tradeoffs you'll encounter. Everything else is details.

On SWE-bench Verified (real-world bug fixing from GitHub issues), Claude Code leads the pack at 80.9%. Codex sits close behind at approximately 80%. Both are solidly production-grade. Cursor's performance depends entirely on which model you select: run Claude through it and you get Claude-level results; run GPT-5 and you get GPT-5-level results.

Token efficiency tells a different story. Independent testing found that Claude Code completed a benchmark task using 33,000 tokens with zero errors. Cursor used roughly 180,000 tokens for the same work — about 5.5x more. Codex is reportedly even more efficient for batch workloads, which matters when you're running dozens of background tasks.
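To make the token comparison concrete, here is a minimal sketch of the arithmetic. The two token counts come from the paragraph above; the per-million-token rate is a made-up placeholder (not any vendor's real price), included only to show how a token gap turns into a cost gap.

```python
# Token counts quoted in the text above.
claude_code_tokens = 33_000
cursor_tokens = 180_000

# How many times more tokens Cursor used, and the absolute difference.
ratio = cursor_tokens / claude_code_tokens
extra_tokens = cursor_tokens - claude_code_tokens


def cost_usd(tokens: int, usd_per_million: float) -> float:
    """Cost of a token count at a flat per-million-token rate."""
    return tokens / 1_000_000 * usd_per_million


# ASSUMPTION: illustrative rate only, not a published price.
HYPOTHETICAL_RATE = 10.0

print(f"Cursor used {ratio:.2f}x the tokens ({extra_tokens:,} more)")
print(f"Claude Code run: ${cost_usd(claude_code_tokens, HYPOTHETICAL_RATE):.2f}")
print(f"Cursor run:      ${cost_usd(cursor_tokens, HYPOTHETICAL_RATE):.2f}")
```

The ratio works out to roughly 5.45, which is where the "about 5.5x" figure comes from. Whatever rate you plug in, the cost ratio stays the same as the token ratio, so the gap matters most when the same kind of task runs many times a day.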

What benchmarks don't capture is coherence across long task chains. Claude Code accumulates context over a session: start with "add authentication," continue to "add rate limiting" three steps later, and it still remembers the architectural decisions it made in step one. Codex executes individual steps cleanly but doesn't carry the same through-line.

Claude Code is strongest at complex refactoring and architectural work. The 1M token context means it can hold an entire codebase in working memory, as long as the code fits in the window, and not just the file you have open. This shows up clearly on tasks like cross-repo refactoring, debugging that requires tracing state across ten files, and any work where the answer depends on understanding the whole system.

Claude Code's extensibility story is also the strongest of the three. It reads CLAUDE.md, a persistent project instruction file that is loaded in every session. Describe your architecture, your forbidden patterns, and your testing conventions once, and the agent applies them from then on. Skills, hooks, and custom slash commands let you automate recurring tasks at a level neither Cursor nor Codex matches.
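As a rough illustration of what such a file might contain, the sketch below writes a starter CLAUDE.md into a project directory. It assumes the file is ordinary free-form Markdown at the project root; every heading, directory name, and rule in it is a hypothetical example, not a required schema, and `write_claude_md` is a helper invented for this sketch.

```python
from pathlib import Path
import tempfile

# ASSUMPTION: all headings, paths, and rules below are invented examples
# of the three things the text mentions (architecture, forbidden
# patterns, testing conventions). Replace them with your own.
CLAUDE_MD = """\
# Project notes for the agent

## Architecture
- API layer in `api/`, domain logic in `core/`, persistence in `storage/`.

## Forbidden patterns
- No direct database access from `api/`; go through `core/` services.

## Testing conventions
- Every bug fix ships with a regression test under `tests/`.
"""


def write_claude_md(project_root: Path) -> Path:
    """Write the instruction file at the project root and return its path."""
    target = project_root / "CLAUDE.md"
    target.write_text(CLAUDE_MD, encoding="utf-8")
    return target


if __name__ == "__main__":
    # A temporary directory stands in for a real repository here.
    with tempfile.TemporaryDirectory() as tmp:
        path = write_claude_md(Path(tmp))
        print(f"wrote {path.name}")
```

In practice you would write the file by hand and commit it with the repository; the script only shows the shape. Short, specific rules tend to be easier to keep accurate than long prose.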

MCP integration is another meaningful differentiator. Claude Code is the reference implementation for Anthropic's Model Context Protocol — connect a GitHub MCP, a Postgres MCP, and a Slack MCP and the agent operates across your actual stack without glue code.

The market is voting: Claude Code authored roughly 4% of all public GitHub commits in March 2026. A February survey of 906 professional engineers put it at 46% "most-loved" — no other coding agent broke 25%.

Cursor is strongest at daily interactive development. If you're building features, making incremental edits, and want to see what the AI is doing as it does it, Cursor's visual diff interface, real-time autocomplete, and familiar VS Code environment are genuinely hard to beat. The tool you use 8 hours a day should feel good to use. Cursor does.

Model flexibility is underrated. The ability to switch between Claude, GPT, and other models per task — without changing tools — lets you optimize for both quality and cost on the fly. Cursor Pro's flat $20/month also looks attractive compared to Claude Code's API-based pricing, which can push $40–$80 on a heavy day.

The learning curve is essentially zero if you already live in VS Code. That matters for teams onboarding new members or for founders who aren't professional developers.

Codex is strongest at asynchronous, background work. This is its genuine architectural advantage: you can assign it a task, close your laptop, and find a PR waiting for you. Cursor and Claude Code require you to be present and watching. Codex does not. For routine feature additions, test generation, documentation updates, and PR review feedback, this async model is exactly right.

Codex also has the tightest GitHub integration of the three. If your team's entire workflow revolves around pull requests, Codex slots in naturally because it speaks that language natively. Its 3 million weekly active users as of April 2026 suggest this isn't a niche workflow.

Pricing at the platform level also works in Codex's favor. A $20/month ChatGPT Plus subscription bundles Codex alongside image generation and browsing, so you get more than a coding tool.

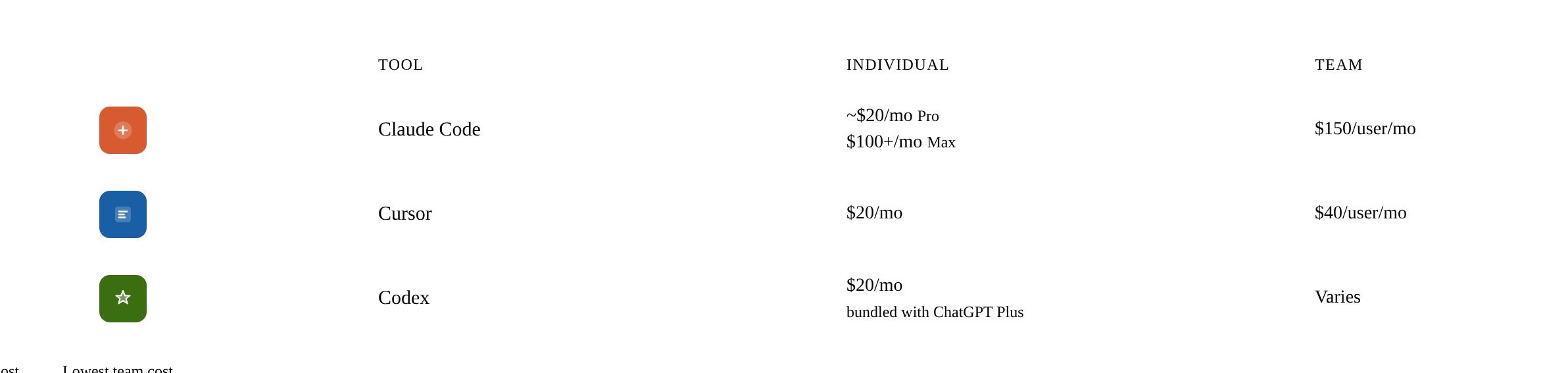

The team pricing gap is significant: Cursor Teams at $40/user/month versus Claude Code Teams at $150/user/month. For a ten-person engineering team, that's $13,200/year more for Claude Code. Whether that's worth it depends on what you're using it for.
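The annual figure follows directly from the two per-seat prices. A minimal sketch of the arithmetic, using only the prices quoted above (the `annual_gap` helper and the extra team sizes are illustrative additions):

```python
# Per-user monthly prices in USD, as quoted in the text above.
CURSOR_TEAMS = 40
CLAUDE_CODE_TEAMS = 150


def annual_gap(seats: int, months: int = 12) -> int:
    """Extra yearly spend for Claude Code Teams over Cursor Teams."""
    return (CLAUDE_CODE_TEAMS - CURSOR_TEAMS) * seats * months


# Team sizes other than 10 are hypothetical, added for comparison.
for seats in (5, 10, 25):
    print(f"{seats:>2} seats: ${annual_gap(seats):,} per year")
```

For ten seats this gives $13,200 per year, matching the figure above. The gap scales linearly with headcount, so it is worth rerunning with your own team size before deciding.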

One honest note on Claude Code cost: Opus 4.6 is billed per token, and the rates add up quickly. A real working session involving complex refactoring can exceed $50 in API usage. Max mode subscription pricing smooths this out but at a higher floor.

Stop asking "which is best" and ask "which fits how I actually work."

Use Claude Code when: you're doing complex reasoning work — large-scale refactoring, architectural analysis, debugging multi-file state, or anything where context depth matters more than speed. Also use it when you want deep configuration control and persistent project memory.

Use Cursor when: interactive daily development is your primary mode — writing new features, making incremental changes, and wanting to see AI suggestions in real time inside a familiar IDE. Also the right pick if your team has mixed technical levels or you want model flexibility without switching tools.

Use Codex when: your work is task-oriented and parallelizable. Assign background jobs — test generation, documentation, routine feature additions — and let Codex work while you do something else. Particularly well-suited to teams already organized around PR-based workflows.
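The three rules above can be read as a small decision function. The sketch below is one possible encoding, not an official rubric: the `Workload` fields, the order in which the rules are checked, and the fallback to Cursor are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    needs_deep_context: bool  # large refactors, multi-file debugging
    interactive: bool         # you want to watch and steer edits live
    can_run_unattended: bool  # self-contained enough to hand off


def recommend(w: Workload) -> str:
    """Map a workload to a tool, following the three rules in the text.

    ASSUMPTION: context depth is checked first, then async suitability,
    and Cursor is the default for everything else.
    """
    if w.needs_deep_context:
        return "Claude Code"
    if w.can_run_unattended and not w.interactive:
        return "Codex"
    return "Cursor"


if __name__ == "__main__":
    examples = {
        "cross-module refactor": Workload(True, False, False),
        "daily feature work": Workload(False, True, False),
        "background test generation": Workload(False, False, True),
    }
    for name, workload in examples.items():
        print(f"{name}: {recommend(workload)}")
```

Real work rarely falls into a single bucket, so treat the output as a starting point for a conversation rather than a verdict.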

The winning combination, per most senior practitioners: Claude Code for architecture and complex refactoring, Cursor for daily feature work, and Codex for background tasks and PR automation. These tools are increasingly designed to complement rather than replace each other — OpenAI even shipped an official Codex plugin that runs inside Claude Code in April 2026.

Tags: claude-code, cursor, codex, ai-coding, developer-tools
